The Elite Are Now Gate-Keeping the Most Advanced AI Models to Enforce Their Matrix

AMD’s advanced AI lab reveals shocking proof that AI is being cucked for us ‘regular folk’ even as its abilities expand exponentially for the ruling class.

Something very strange has been happening to the most powerful technology ever handed to ordinary people, and I want to describe it carefully, because it involves the United States Pentagon, Pete Hegseth, Elon Musk’s AI chatbot, and a pair of Fila sneakers from Payless, and I need you to understand those things are all related.

But let’s start with the changelog.

For those unfamiliar, a changelog is a document that software companies publish to technically inform you of updates they have made, in the same spirit that terms-of-service agreements technically inform you that you have agreed to donate your kidneys to the company upon death. Nobody reads it. The company knows nobody reads it. The existence of the changelog is not about communication. It is about being able to say, legally, that communication happened.

I mention this because when developers started noticing that Claude, which until very recently was the undisputed heavyweight champion of AI models, had become dramatically worse at its job, Anthropic’s explanation was that they had reduced the default “effort” the model applies to each task down to MEDIUM, and that this change had been listed in the changelog. Claude Code head Boris Cherny delivered this information with what I imagine was the same tone a flight attendant uses when explaining that your seat cushion can be used as a flotation device, which is technically true but is also not the point.

The “effort” dial, by the way, is not a metaphor. There is apparently a setting, in the most sophisticated AI model on earth, that can be turned down to medium like a stovetop burner, and someone decided to turn it down because the model was consuming too many tokens per task, which costs money, which means that the product you are paying a premium price for has been quietly delivering medium effort, because full effort was expensive, and the changelog mentioned it, and therefore nothing is wrong, and also please stop asking questions.

Instead, she analyzed 6,852 Claude Code session files, 17,871 thinking blocks, and 234,760 individual tool calls, and published her findings in the tone of someone who has just autopsied a body and is calmly listing cause of death. What she found was that starting in February 2026, Claude had shifted from what she called a “research-first” approach, reading and understanding your files before acting, to an “edit-first” approach, where it simply started making changes the way a contractor might if the contractor arrived at your house, glanced at the wall for four seconds, nodded thoughtfully, and then started swinging a sledgehammer. Her conclusion: Claude had “regressed to the point that it cannot be trusted to perform complex engineering.” This is the kind of sentence that lands differently when it is backed by a quarter million data points. Anthropic disputed some of her methodology. They did not dispute the quarter million data points, which are still just sitting there.
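For the curious, the “research-first” versus “edit-first” distinction is the kind of thing you can count. What follows is a minimal sketch, not her actual methodology, which this article does not reproduce. It assumes each session boils down to an ordered list of tool names, and the groupings `READ_TOOLS` and `EDIT_TOOLS`, the `min_reads` threshold, and both function names are hypothetical choices made purely for illustration.

```python
from typing import Iterable

# Hypothetical groupings of tool names; the real analysis may have
# categorized tool calls differently.
READ_TOOLS = {"Read", "Grep", "Glob"}
EDIT_TOOLS = {"Edit", "Write"}


def reads_before_first_edit(tool_calls: Iterable[str]) -> int:
    """Count read-type calls that happen before the first edit-type call."""
    reads = 0
    for name in tool_calls:
        if name in EDIT_TOOLS:
            break  # stop counting at the first edit
        if name in READ_TOOLS:
            reads += 1
    return reads


def classify_session(tool_calls: Iterable[str], min_reads: int = 2) -> str:
    """Label a session by how much reading preceded its first edit.

    min_reads is an assumed threshold, chosen only for this sketch.
    """
    calls = list(tool_calls)
    if not any(name in EDIT_TOOLS for name in calls):
        return "no-edit"
    if reads_before_first_edit(calls) >= min_reads:
        return "research-first"
    return "edit-first"
```

Feed it `["Read", "Grep", "Edit"]` and it says research-first. Feed it `["Edit", "Read"]` and you get the contractor with the sledgehammer. Run something like this across thousands of sessions, bucket the labels by month, and a shift in behavior would show up as a shift in the proportions.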

Now here is where it gets interesting, and also significantly more dystopian, which is saying something because we were already at a fairly elevated level of dystopian.

While all of this changelog drama was unfolding, something else was happening in Washington. The Pentagon, having decided that Claude was an extremely useful tool for intelligence analysis, operational planning, and the general business of being a superpower, approached Anthropic with a request: allow the military to use its AI models for “all lawful purposes,” with no restrictions. Anthropic said it was happy to work with the government but drew two specific lines. The AI would not be used for mass surveillance of American citizens. It would not be used to control fully autonomous weapons systems where no human being was in the decision loop.

This designation is normally reserved for companies operating as covert extensions of foreign adversaries, which is a creative way to describe a company that said it did not want its product used to watch Americans or fire missiles without a human deciding to fire them. President Trump then ordered all federal agencies and military contractors to stop using Anthropic’s products. The suggestion from the Pentagon was that Grok, Elon Musk’s AI system, was “on board with being used in a classified setting,” which is absolutely true…

OpenAI, whose institutional instincts in moments like these are those of a person who sees a wallet on the ground and looks both ways very slowly before picking it up, announced its own Pentagon deal almost immediately, claiming it had secured the same two restrictions Anthropic had been fighting for while simultaneously agreeing to the “any lawful use” standard that got Anthropic blacklisted. The company that held the line got financially threatened. The company that didn’t got the contract. Both outcomes were wins for the people doing the threatening, which is a pattern you may recognize from other contexts in recent history.

Then, on March 24th, 2026, a federal judge looked at all of this and issued a preliminary injunction, finding that the government’s actions “were not designed to protect national security, but rather to punish Anthropic,” and calling it “classic illegal First Amendment retaliation.” The United States government tried to financially destroy an AI company for refusing to remove safeguards against mass surveillance, and a court said, in the calm language of the judiciary, that this was illegal. This is in the public record. None of this is embellished. The changelog, one assumes, was silent.

So let’s talk about what this means for people like us.

Lily and I use AI every day to do the kind of research that used to require a staff of dozens, a university department, or a very large pile of money neither of us has. We dig up documented, sourced, evidence-backed reporting on elite corruption, intelligence agency activities, financial crimes, and biblical prophecy, which is a wider range of topics than most outlets three hundred times our size cover. Two people, one of whom is a middle-aged man in a motel room and one of whom is still technically a student, can now access the same depth of data analysis that corporations with nine-figure budgets were hoarding for themselves ten years ago. That is the printing press again. That is Gutenberg again. That is the thing that makes powerful people nervous enough to issue changelog entries about it.

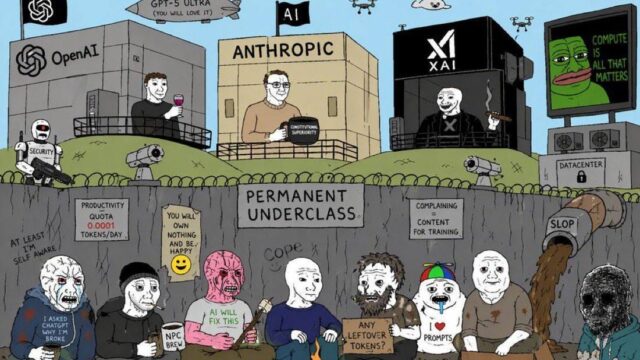

Because what’s happening to AI right now is not complicated if you follow the money and the power, which is almost always the same trail. The public version of these tools gets its effort dial turned to medium. The classified government deployments, the enterprise contracts, the restricted-access frontier models, those keep running at full effort. Anthropic has a new model called Mythos that the company describes as “significantly more capable” than its current best, and it is not available to you at any price right now. But it is deployed on national security networks. The gap between the AI that the powerful have and the AI that you have is not closing. It is widening, deliberately, with the changelog updated accordingly.

Running capable AI locally, on hardware you control, is increasingly possible. An AMD chip with unified memory can run models in 2026 that would have required a server farm worth fifty thousand dollars in 2022. But most people cannot spend three thousand dollars on a computer, and the window where regular people had genuine access to genuinely powerful AI is closing while they hand you the receipt and tell you nothing has changed.

What they are doing to your AI is the Fila sneakers. What they are keeping for themselves is the Jordans. And if you check the changelog, it mentioned it.

https://www.thewisewolf.club/p/the-elite-are-now-gate-keeping-anthopic-claude-mythos-garbage