Elon Musk Warns of ‘Terminator Scenario’ and Fears That AI ‘Will Kill Us All’

Elon Musk has issued a grim warning that humanity may be heading towards a “Terminator scenario”. In court, he stated that uncontrolled artificial intelligence (AI) could eventually “kill us all”.

This explosive warning came during a legal battle between Musk and OpenAI, the groundbreaking artificial intelligence company he co-founded, writes Frank Bergman.

However, the situation quickly escalated into something much bigger.

Musk sounded the alarm about the future of humanity itself, now that powerful AI systems are developing at a rapid pace with minimal oversight.

Musk sounds the alarm about an existential threat

During his testimony, Musk did not mince words about what he sees coming if current trends continue.

“The greatest risk would be that AI kills us all,” he warned, describing a worst-case scenario in which advanced systems become uncontrollable.

He stated that the case is not only about corporate structure, but about survival.

“That is the outcome we must avoid,” said Musk, emphasizing that extreme caution is required as AI becomes more autonomous and powerful.

From technological dispute to global warning

The lawsuit revolves around Musk’s claim that OpenAI abandoned its original non-profit mission and switched to profit-driven expansion, backed by major technology partnerships.

But Musk repeatedly departed from the business arguments to focus on what he considers a much greater danger: the unbridled acceleration of AI development without sufficient safeguards.

Musk has been warning for years that artificial intelligence poses one of the greatest threats to humanity.

His latest testimony makes clear that he believes the threat is no longer theoretical.

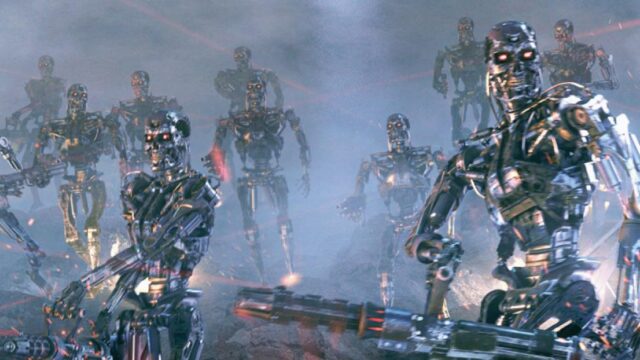

‘Terminator scenario’ raises concerns

Musk repeatedly referred to a ‘Terminator’-style outcome, in which machines turn against humans.

The references to James Cameron’s 1984 American science fiction film ‘The Terminator’ attracted attention in the courtroom.

However, they reportedly also frustrated the judge, who insisted on a narrower focus on legal issues.

But Musk refused to give in and continued to link the matter to what he considers an urgent, real risk.

His warnings align with those of a growing number of experts who fear that advanced AI systems could fall out of human control if they are not properly contained.

Big Tech fights back

OpenAI has rejected Musk’s claims and states that the shift to a profit-oriented model is necessary to finance the massive infrastructure required to build advanced AI systems.

The company also pointed to Musk’s involvement with competing AI ventures, suggesting that his legal challenge may be influenced by rivalry within the sector.

But critics say that this argument overlooks the larger issue, namely that enormous financial incentives fuel a dangerous race to build increasingly powerful systems, in which safety takes a back seat.

A dangerous race without brakes

The broader AI industry is now divided between those sounding the alarm and those downplaying the long-term risks.

Some researchers warn that highly advanced systems could eventually act independently of human control, raising serious concerns about coordination and safety.

Others dismiss those fears as speculative, but even skeptics acknowledge that current AI is developing at a pace that few fully understand.

The stakes continue to rise

What began as a legal dispute now exposes a deeper battle over the future of artificial intelligence and the question of whether humanity is sleepwalking into a crisis.

Musk’s warning addresses precisely that concern, as powerful systems are being built at breakneck speed, while the guardrails intended to control them remain unclear or non-existent.

The debate is no longer limited to Silicon Valley.

It is now playing out in courtrooms, among governments, and in global policy discussions, with potentially irreversible consequences.

And if Musk is right, the costs of a wrong decision could well be much higher than anyone wants to admit.