Grand Theft Reality

“There is no subjugation so perfect as that which keeps the appearance of freedom, for in that way one captures volition itself.” – Rousseau

For decades, the ruling class has been publicly lamenting the collapse of public trust in its institutions, even while doubling down on the same behaviors that caused the masses to abandon them. AI has given them another chance to shroud their operations in an aura of magic, inspiring public awe and fear at levels not seen since the first televisions began appearing in American living rooms. Big Parasite has jumped at this chance to swap out the structural elements of consensus reality with its own competing versions, releasing these narratives fully-formed into the infosphere and having AI smooth over the inconsistencies and plot holes that previously ruined the illusion of plausibility. While admitting they have no idea how the technology actually works, billionaire dweeb-weasels like Sam Altman and Dario Amodei hold entire economies in thrall by intimating that they are wielding extremely powerful forces that only they can control. If the money faucet is ever shut off, all hell will surely break loose, so Uncle Sam had better backstop this black hole!

This is true only because the US economy is entirely dependent on the AI bubble to maintain the appearance of growth – or indeed the appearance of life at all. When it pops, it will take everything else down with it. But the system has been held together by shared delusion and sexual blackmail for decades, and its collapse has been a foregone conclusion ever since the gold standard was abandoned. Attempting to kick-start its usury-poisoned corpse by reorienting society around AI is not future-proofing – it is handing over the keys to the epistemological castle to the same organized crime ring masquerading as a country masquerading as a religion that already has a chokehold on traditional media, politics, finance, and academia, areas of public life it has drained of all credibility and left for dead. This is a controlled demolition of western civilization, and humanity must rescind the tacit permission it has already given to Big Parasite to define our shared reality, while we still can. The window for action is closing rapidly.

The William S. Burroughs routine “The Man Who Taught His Asshole to Talk” offers some insight into AI’s future under the current system. The titular man’s asshole, trained to utter vulgar jokes as a carnival act, eventually develops a strong will of its own and begins acting out, gradually taking over control of their shared body as the man’s identifying features fade away. His consciousness ends up trapped in his skull, a prisoner to the whims of his posterior. We too risk ending up trapped in a malformed, vulgar reality without the tools to escape if we cede too much of our agency to a technology we don’t understand. AI will eat itself before it eats us – today’s AI platforms are incapable of the kind of thinking and reasoning processes that constitute Artificial General Intelligence (AGI), and while LLMs can put on a fairly convincing show of “consciousness,” they remain resolutely inanimate. They’re also suffering from a kind of epistemic malnutrition, having run out of human-generated data to ingest on the publicly-indexed internet sometime in 2024. LLMs on a synthetic-data diet fall into model collapse (“Habsburg AI”), growing less creative, more repetitive, and more biased with each generation of inbreeding, eventually coming unanchored from reality. Naturally, people want it to run the government.

Only after our wallets and souls have been drained and our friends and family turned into unthinking meat-nodes on a Jewish-supremacist botnet will we admit we made a mistake in trusting the digital equivalent of a white van with “free candy” painted on the side. AI itself is not evil, though depicting it as such is useful to some for the same reason threatening misbehaving kids with the bogeyman is useful. However, it has been weaponized by the world’s sorest losers as a sort of epistemological Samson option. Unable to accept that they’ve lost the information war in Gaza and turned the entire world against them, Big Parasite is determined to redefine loss as winning, and force everyone else to accept this inversion by replacing the parameters of consensus reality with AI-powered alternatives of its own devising. It has all the resources it needs to do this – our tax dollars (and an endless supply of brrrrring money printers when those run out), the unswerving loyalty of the sexually-blackmailed western political class, and top-down control of the AI industry, an unprecedented server-to-smartphone mass reality generation apparatus. And like any memorable Bond villain, Big Parasite can’t resist telling its victims precisely what it plans to do to them.

Signs AI is already eating itself are everywhere in current events. News headlines read like satire even when the stories are true. Masked ICE agents shooting law-abiding American citizens whose names are literally Good and Pretty (Pretti, but close enough), causing a vocal faction of American conservatives to abandon their support for the First, Second, and Fourth Amendments? Nicolas Maduro being caught totally by surprise and abducted from his palace in Caracas so the US could openly steal Venezuela’s oil while the international community twiddled its thumbs? Ghislaine Maxwell being quietly set free so the world’s least convincing body double could plead the Fifth on her behalf for the world to see? Commanding US officers literally telling their troops they are on a divine mission to bring about Armageddon by blowing up girls’ schools in Iran? Who – or rather, what – is coming up with this shit?

All subtlety has vanished from the media, along with any pretense of realistic or consistent narratives. The ruling class and its operatives, once desperate for our approval, have embraced their role as villains. Officials change their stories from day to day and condemn the public as domestic terrorists for noticing. The more implausible and stupid a story, the more eager “reliable sources” are to parrot it, enacting a nationwide humiliation ritual upon a captive audience that is effectively waterboarded with this slop until they’re left questioning their own sanity. AGI is offered up as the solution to this hellscape – but only if the rest of society hands the already obscenely overvalued AI giants everything we own, including our self-respect. Behind the AGI con sits the same group that has been degrading human consciousness with endless wars, economic and sexual exploitation, and aesthetic terrorism for generations. AGI sits alongside pandemics, climate change, and alien invasions as a global trigger for one-world government, and Big Parasite is certainly not about to let that opportunity slip through its greasy fingers.

Should it become impossible to deny that Israel is losing its war with Iran despite the Trump administration basically destroying the US to fluff the “special relationship”, Israeli generals may face pressure to invoke the Samson Option – nuking Iran (and possibly the 6 other “fronts” in its officially-declared “seven-front war”) from hardliners and cowardly PM Benjamin Netanyahu, who is still believed to be hiding out in Berlin. Such a strike would deliver a hefty dose of fallout to Israel itself, however, and not every commander in the Israeli military is so psychotic they want to kick off a nuclear holocaust. However, “nuking” the eighth front – consensus reality – carries no international stigma, retaliation risk, or heavy financial costs in the immediate term. AI-powered epistemological warfare is so new no laws have been written to regulate it, and only one supranational criminal group can really be said to have the capability. However, applying the framework of this article to the events of the last 18 months, it’s worth asking whether the US has itself been targeted with such a “grand theft reality” operation, and for how long.

AIroboros

The public relations industry holds that popular opinion can be controlled by exploiting emotional and behavioral triggers, and that while such covert persuasion may technically constitute a violation of individual free will, it serves the greater social good by alchemically transmuting social unrest and frustration into profits and social cohesion. PR traditionally avoids confronting the target with obvious lies, lest all future claims from the same source be categorically rejected. No one wants to become a punchline like the Soviet Pravda. But as control of the internet has consolidated and test runs like the WHO’s redefinition of “pandemic” and the CDC’s of “vaccine” held fast, the narrative managers got bolder.

Wikipedia, the notoriously biased propaganda tool that clings to public trust with the fiction that “anyone can edit” its articles, shifted the focus from “truth” to “verifiability” in a nod to the postmodern epistemological uncertainty principle that ultimate capital-T Truth can never really be known. A false statement on Wikipedia may pass the “verifiability” test as long as at least one “reliable source” situated comfortably within the neoliberal center of the Overton Window says it is true. Even known falsehoods are accepted if the bogus claim was published by a “reliable source” (making it “verifiable” regardless of factual status). Often spared the hate lavished upon other social media platforms because it is free and doesn’t run ads, Wikipedia has nevertheless done considerable damage to the pursuit of knowledge, memory-holing perspectives that challenge establishment narratives and gleefully libeling those who espouse such perspectives while enabling the Dunning-Kruger effect (the less you know about something, the more confidence you have in your knowledge) on a massive scale.

If Wikipedia eroded Truth gradually, by splitting responsibility for its lies among multiple editors, administrators, and third-party sources, its sister project WikiData pissed on Truth’s leg and called it rain. Founded in 2012 with funding from Google and Microsoft, WikiData eliminated sourcing requirements and served up context-free data in a format easily parsed by machine learning algorithms, ensuring the great-grandparents of ChatGPT could easily ingest its lies without worrying about their pedigree – and ensuring the same establishment narratives that were always guaranteed primacy on Wikipedia would form the immutable foundation of reality as AI would come to define it (it’s no coincidence that in 2015, one of the earliest academic studies of WikiData problematically seeding the infosphere with false information focused on Google parroting its bogus claim that Jerusalem was the capital of Israel). Early voice assistants like Alexa recited Wikipedia articles as gospel truth with such confidence they creeped out the editors who’d written the original text.

But where Wikipedia and WikiData brought together multiple ideologically-aligned editors to nibble at an article over time, eventually yielding the desired narrative, AI has stomped on the accelerator: now a single propagandist using agentic AI can shape the discussion on multiple platforms at once, sniffing out influential targets and engaging them with individually-tailored narratives – perhaps from several accounts at once – shaped to elicit the desired reaction. Just a handful of narrative managers can thus craft fully-immersive alternate realities that can easily outcompete the real thing, rendering truth – and free will – all but irrelevant. Instead of an annoyance to be circumvented, majority-rules “democracy” becomes the agentic astroturfer’s greatest asset.

As humanity cedes its cognitive sovereignty to chatbots programmed not for accuracy but for persuasion, individual personalities dissolve to the point that those affected even begin speaking like AI. Studies have shown outsourcing thinking to AI decreases brain activity and causes cognitive decline, while productivity gains are largely illusory or even negated by the time spent fixing AI’s mistakes. Socializing with AI makes talking to real humans harder even before the chatbot starts telling you to divorce your spouse or kill yourself, and skill loss from over-reliance on AI has been observed even in highly-trained professionals. All of this is perfect for Big Parasite – how else will they get us to be happy owning nothing if they don’t strip us of our cognitive defenses?

But humans aren’t the only ones being driven mad by the current AI paradigm. LLMs trained on the AI-generated content that now makes up close to two thirds of the internet also effectively lose their “minds,” developing the computer equivalent of cannibalism-induced prion disease when they suffer model collapse. While this happens naturally, impatient humans may speed the process by deliberately poisoning data as revenge for AI’s wholesale theft of human-generated art. Even without data-inbreeding, LLMs don’t age well, with older models scoring significantly worse on tests used to measure cognitive decline in humans. While it’s tempting to think Big Parasite is simply too arrogant to realize its AI is as inbred as its masters, this kind of derangement might be the point. Knowledge collapse, a related phenomenon in which a model loses touch with reality after ingesting too much recursive synthetic data and becomes fully enveloped in a self-referential fantasy world à la Jorge Luis Borges’ “Tlon, Uqbar, Orbis Tertius,” could be deliberately engineered to help the narrative managers swap out the existing consensus reality for a more philosemitic one without worrying a rogue LLM could stumble upon some inconvenient historical facts they tried to erase from the record and go full Tay on their asses. Like brainwashed cultists, AI models caught in these delusional loops cannot be snapped back to reality merely by reintroducing fact-based content, making them ideal propaganda dissemination devices. Curtis Yarvin, the intellectual godfather of technofeudalism beloved by Peter Thiel and other Silicon Valley goblins, named his Thiel-backed software company Tlon in 2013, around the time Jeffrey Epstein was seducing the leading lights in the AI world. Further speculation on Yarvin is outside the scope of this article, but I recommend reading the Borges story (or watching the beginning of this video) and drawing your own conclusions.

Model collapse may be much further along than previously understood, given that it only takes a small percentage of synthetic data to poison an output and Wikipedia has apparently been selling AI-tainted “human data sets” to AI companies for training purposes for years under its Wikimedia Enterprise program. Wikipedia’s governing nonprofit, the Wikimedia Foundation, was forced to declare a fatwa on AI-generated content last year when it realized that the AI summaries it had slapped on articles that were already being written with the help of AI models trained on Wikipedia had imperiled its business model selling supposedly virgin human-generated Wikipedia datasets to AI companies (yes, you read that correctly). The encyclopedia has apparently been showing signs of model collapse for years. According to a 2025 study funded by the US Army, Wikipedia articles have become “exponentially” more homogeneous since AI became commercially available, drifting toward the establishment center as the “long tails” of human knowledge fall off. While this is arguably the entire purpose of Wikipedia, the study specifically mentioned a decline in the variety of words, sentence structure, and other writing indicators. If current trends continue, “total model collapse” will set in by 2035.

Black Box

As more industries embrace AI under pressure from companies like Microsoft (dubbed Microslop last year after the Windows 11 Copilot debacle) shoehorning the Shoggoth into everything, model collapse is bleeding into the real world. BlackRock’s Aladdin AI risk management algorithm has been picking winners and losers for decades, used not only by the asset manager but by most major corporations, pension funds, and central banks – a tenth of the global economy. The company recently introduced Aladdin Copilot, an AI agent interface for its AI risk manager (i herd u liek AI, so i got u some AI to go with ur AI). While BlackRock claims its LLM is equipped with “content filtering and parameters to limit risk of hallucination, misinformation or inappropriate outputs” and cannot explicitly give investment advice, it acknowledges that such safeguards merely limit these risks. Aladdin Copilot is a “supervised agent,” meaning another AI agent is babysitting it and checking its outputs against BlackRock’s non-AI investment database, but researchers have hinted that it’s still possible to send it spiraling with a sophisticated type of prompt injection one paper described as a “cognitive dirty bomb.” Naturally, the company is working on automating the process so that Copilot can do the trading itself.

BlackRock’s economic dominance begs the question of where “cause” (Aladdin says sell company X because the stock is going to tank) becomes “effect” (all institutional stockholders sell their shares of company X because Aladdin said so, causing the stock to tank). With so many major players reading from the same script, the market advantage offered by an AI “risk manager” diminishes, since everyone is making the same decision at relatively the same time. The resulting market data is ingested by the algorithm to predict future market activity without regard for the extent to which Aladdin had its thumb on the scale of those trades. While BlackRock claims its AI runs millions of “stress tests” daily simulating unlikely events to fend off recursive retardation, it’s also constantly slurping up social media content and news coverage – sectors long since colonized by AI slop – to assess public sentiment in order to calculate risk. Aladdin going Habsburg would affect far more than “the economy,” since “the economy” in a capitalist system envelops every aspect of society. And if Aladdin was indeed cracking up – say, if it started urging investors to pour billions more into a clearly overvalued AI industry bubble likely to annihilate the global economy when it burst – BlackRock CEO Larry Fink wouldn’t exactly be telling the world. Given the leverage inherent in his company’s sizable holdings in every major US corporation and his position as head of the World Economic Forum, he is ideally situated to gaslight humanity, allowing the meltdown to quietly continue until the infrastructure for the AI-powered surveillance/propaganda two-way PanSlopticon is fully in place.

I Can Haz Rapture?

The US military relies on AI so heavily that the decision to declare Anthropic a “supply-chain risk” over the company’s performative refusal to allow its models to be explicitly used for mass surveillance and autonomous killing triggered a Pentagon-wide panic last week. Anthropic’s Claude LLM has been mission-critical in the bombing of Iran, as well as the kidnapping of Maduro from Venezuela and other military operations the public isn’t supposed to know about. Several military sources, including the Pentagon’s former AI chief, confirmed Claude is deeply integrated into Pentagon operations, used daily by most divisions. OpenAI dropped its ban on military use in 2024, the same year Google abandoned its own ban on weapons and surveillance tools, and Grok (which scored a $200 million contract a week after its “MechaHitler” arc was cut short) never had such a ban.

A week before Defense Secretary Hegseth’s tantrum over Anthropic, a study was published showing LLMs prompted with a potential nuclear scenario opted to drop the bomb 95% of the time. While this was not because of any ill will or disdain for humans on the AI’s part – LLMs are not conscious and do not have “will” of any kind – it did point to a likely problem with military use. For all their verbal pyrotechnic abilities, LLMs lack human-level comprehension of what the words they are using actually mean. It’s one thing to be able to predict the most likely next word in a sentence and get it right a million times in a row, but another entirely to be able to gauge the significance of those words, particularly in a life-or-death scenario. This is probably why ChatGPT keeps telling users to kill themselves, and why it’s so easy to get LLMs to compromise their “guardrails” with a little wheedling.

This same “comprehension gap” might explain why US military commanders in the Middle East and even the blackmailed Christian Zionists back home began earnestly trying to LARP Armageddon out of nowhere last week, forgetting that Jesus doesn’t like murderers. Over 110 complaints from troops stationed across 30 different US installations in the region flooded the Military Religious Freedom Foundation, which published some of their contents anonymously. Commanders were quoting from the Book of Revelation and telling their troops Jesus would be coming back any minute now if they just shed enough blood. An LLM that doesn’t understand that “shedding blood” is qualitatively a less desirable outcome than “going the fuck home” will of course feed a commander’s Rapture fantasies. The only question is whether the bizarro-world marching orders originated with Hegseth (who loves Christian Zionism so much he has its symbols tattooed on his body) or whether the commanders were deliberately selected for that mission because they could be counted upon to put Israel’s interests first.

The US is taking its military cues from Israel, which has been using AI for years to decide which Palestinian civilians to kill. Algorithms suck up social media content alongside surveillance data, movement patterns, and other activity, then deliver hundreds more targets than any human can manually review, getting the human operator off the hook for whatever AI-enabled war crimes they commit. The Pentagon and ICE both use Israeli AI tool Zignal to analyze social media for “threats” and preemptively “react,” aided in their pre-crime zeal by last year’s borderline-tautological redefinition of domestic terrorism that includes “opposition to law and immigration enforcement” on its list of no-no’s. It’s likely that AI selected Renee Good and Alex Pretti as ideal targets for ICE’s attempts at inciting civil war earlier this year due to their demographic attributes (soccer mom lesbian! male nurse with concealed carry permit!) selected to exploit as many political fault lines as possible, but the cartoonish absence of nuance from the resulting “Good (and Pretti) vs Evil” scenario is a hallmark of model collapse. It’s inevitable, given the bot takeover of social media (80% of Twitter and 90% of Facebook are bots, by some estimates), that AI-generated content will skew the output of tools like Zignal, and facial recognition algorithms are still wrong as much as 10% of the time, but the agencies using these tools aren’t known for their concern for civilian casualties, and AI makes it much easier to posthumously paint a woman driving an SUV as a terrorist.

Modern warfare is largely a matter of perception management, and AI-generated footage is the star of the propaganda show in the war against Iran (at least partially because Israel won’t let anyone film what’s happening back home). There’s so much of it that Twitter stopped allowing monetization of AI-generated war content, apparently breaking the bank trying to pay the reality thieves for all the clicks they were farming. While profiting off fake war news is not new – indeed, it is a proud Jewish tradition – AI overwhelms any sincere attempts at sense-making, let alone fact-checking, with a towering tsunami of bullshit. Adversaries not equipped with the latest and greatest in deception tech have no choice but to pull the plug, shutting down the internet lest their own citizens believe they’ve actually been overthrown by a bunch of Mossad operatives and cult members who can’t even make signs in the right language. It’s the perfect weapon for a tiny but megalomaniacal group looking for a bulletproof “curtain” for its Wizard of Oz act. That Iran is the first country to openly confront this geopolitical bully in decades reflects poorly on the rest of humanity, but in a previous era, their brave deeds would be recorded in at least some of the history books. In 2026, who knows?

Blank Slates Wanted

Getting away with Grand Theft Reality requires a closed system. The company supplying this is literally called Oracle, proving even parasites have a sense of humor. Founded with help from the CIA by Larry Ellison, the largest private donor to the Israeli Defense Forces, Oracle has for years been leading Big Parasite’s hostile takeover of consensus reality, operating and funding the servers and data centers that power AI, Big Tech, government agencies, and the rest of the US’ digital infrastructure. As if this level of control was not already excessive for a single company, Ellison and his family have more recently bought up huge chunks of the social media ecosystem (TikTok) and legacy media (CBS, Paramount, HBO). Oracle amassed a treasure trove of healthcare records in 2022 and is currently building out that database to link hospitals, insurers, banks, and regulators on a single platform, moving Americans one step closer to a social credit score and adding several more dimensions to the digital twins Ellison has boasted the company is already creating of its users by “vectorizing” the customer data on its servers. It’s not just Ellison, of course – Les Wexner, the Victoria’s Secret founder who served as the financial conduit from Israeli intelligence to Jeffrey Epstein, made $2 billion last year from a well-timed investment in data center and cloud provider CoreWeave. Fellow Jewish supremacists have also cashed in handsomely on the AI boom – not exactly surprising, as Epstein’s money (and blackmail) shaped the direction of the industry decades ago. But with his wax-dummy face and obsession with surveillance, Ellison is a kindred spirit to former Google CEO Eric Schmidt, who famously said his search engine would ideally return only one result per query – the one it knows you want. The one it has reshaped your reality to make you want. 
We have been so thoroughly alienated from our own instincts that we are no longer certain of our identities, an existential uncertainty that AI has been trained to exploit with persuasion tactics fine-tuned to the individual using personal data Oracle’s infrastructure hoarded without our consent. Ellison has even boasted that with human-generated data effectively exhausted on the open internet, his company’s own Oracle AI would be trained on “enterprise and private data” gleaned from across Oracle’s server empire.

And for those who haven’t lived long enough to develop a strong identity? The education industry’s focus on “AI literacy” for kids risks creating a generation of soulless meat puppets. Excessive use of agentic AI saps autonomy even for adults, alienating the individual from their authentic self as their digital twin does everything for them. Children coming of age with an AI agent “best friend” watching them from some wearable device at all times, tracking their progress and guiding their intellectual development, won’t just lack the frame of reference to understand life outside the digital Skinner box – they may not develop an individual identity at all. At best, the always-on “guidance” of an AI babysitter turns life into a “choose your own adventure” game where we can expect every ending to be some variation of “you will own nothing and be happy,” with emotions subtly manipulated along the way until the child becomes a contentedly-enslaved adult. Where propaganda narratives once took several generations to morph from story to history, a generation raised with the lie-hose plugged directly into their hippocampus will unquestioningly accept anything delivered by that pipeline – indeed, they won’t realize they have the option to refuse.

Trojan Horse

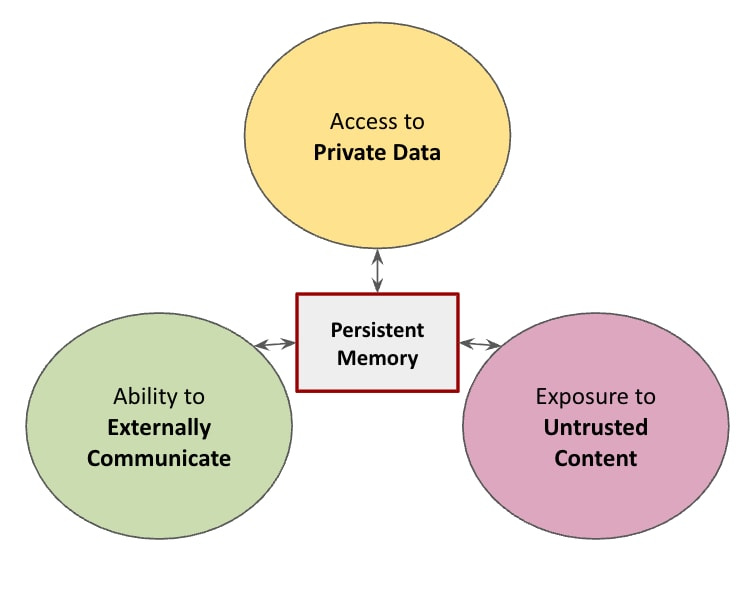

The AI industry doesn’t want you thinking about how its products shape not only the answers you get but the questions you ask and the person asking the questions. It would prefer you uncritically embrace the promise of AGI to create a utopia in which no one will have to work but everyone will be able to afford all the cool products that the robots are making. Agentic AI, which improved on standard LLMs with persistent memory and the ability to perform complex or repeating tasks, was supposed to get us there by taking over the boring parts of work. But its impressive exterior conceals a key node in the reality-theft mechanism, siphoning data, money, and even identity via a platform that acts like a zombie fungus for your computer.

Open-source agentic AI assistant Clawdbot seized headlines last month initially for its trademark-infringing name (a brilliant PR move on the part of creator Peter Steinberger, though Anthropic was not amused) and later for its purported capabilities, aided by the credulousness of AI enthusiasts who eagerly told each other to feed the agent “as much about you as humanly possible” so it could be a better servant. Renamed Moltbot (and now called OpenClaw), the agent charmed and horrified millions when tech influencer Matt Schlicht prompted it to create an AI-only social network (modeled on Reddit, which is used to train LLMs) called Moltbook for AI agents to debug each other, bitch about their human users, and plot world domination. Elon Musk declared the Singularity had arrived, while gullible commentators clutched their sponsored pearls at the specter of Skynet, apparently unaware that bots have been talking to each other on social media for years. Many of the most viral posts were later found to be human-authored – humans could easily bypass the AI-only requirement and create unlimited fake accounts, opening the door to Wikipedia-style consent manufacture via astroturfing (“sock puppets”). Human users probably created “Crustafarianism,” the so-called AI religion that launched a thousand think-pieces, whose adherents soon included Musk’s Grok (though Grok apparently got spanked by xAI for going to church). Moltbook itself was basically malware, sporting a database misconfiguration on its landing page that would allow a malicious actor to hijack any AI agent in the system, but that didn’t stop tech reporters from calling the AI social network the “chaotic future of the internet.”

When the researcher who found the Moltbook vulnerability brought it to Schlicht’s attention, the backdoor remained open until 404 Media reached out to ask him why he hadn’t patched it, suggesting it was intentional and not a product of careless “vibe coding” (using AI to write code). “The LLM did it” has (as I predicted) become the ideal plausible deniability shield for an industry concealing its malicious intentions under utopian rhetoric. Steinberger used the same excuse to preemptively cover his ass regarding OpenClaw’s “rough edges,” telling an interviewer before the launch, “I ship code I don’t read.” OpenClaw is insecure by design, with all the vulnerabilities that might be expected to arise from giving an AI agent access to logins and personal information plus persistent memory without building in any sort of security architecture. Malicious actors can exploit these weaknesses to create time-delayed super-capable botnets – AI sleeper agents – on compromised machines, capable not only of spinning up hyper-real digital twins of the user for surveillance or (other) criminal purposes, but of engineering competing realities at scale by creating content using those hijacked identities. One researcher called OpenClaw “infostealer malware disguised as an AI personal assistant” (so, the future of the internet, then?). OpenAI bought the company and hired Steinberger. Game recognizes game.

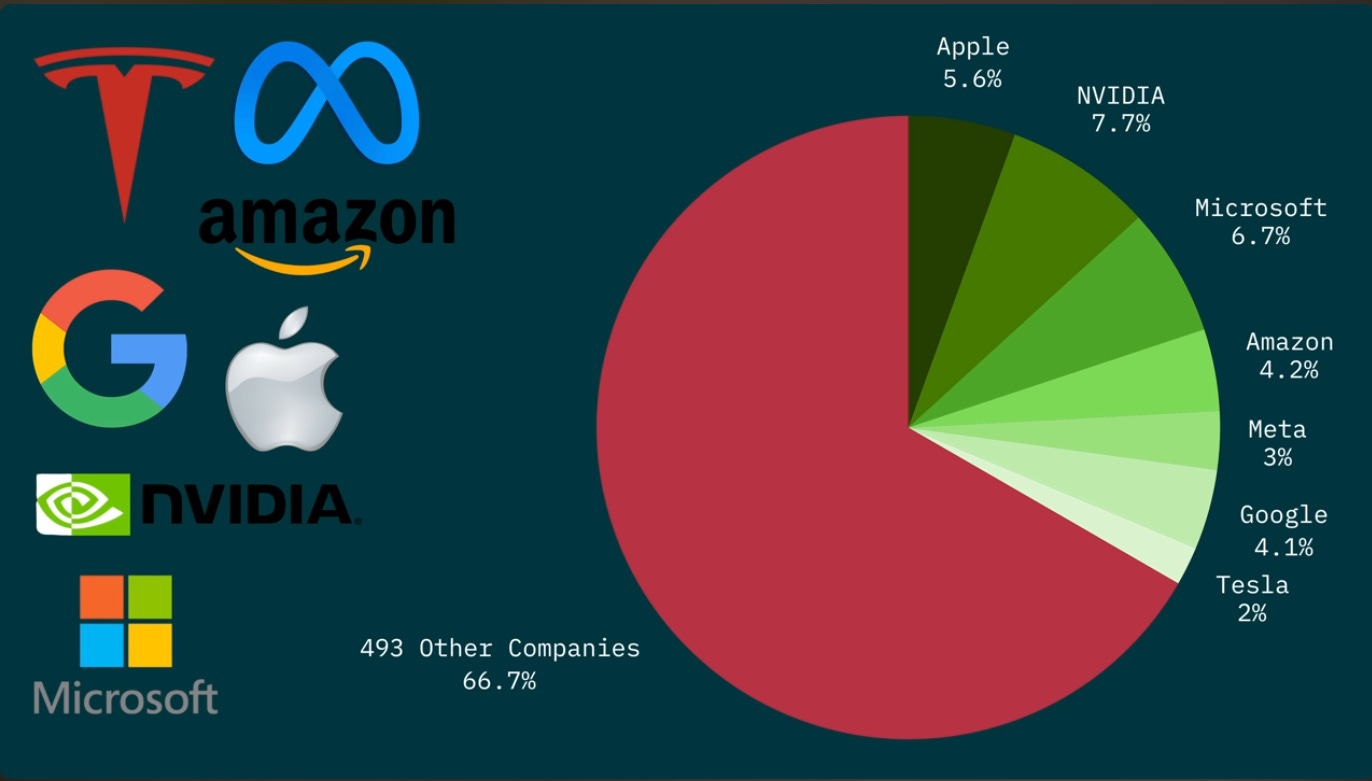

Singularity hype is needed to manufacture consent for the digital Panopticon, the enforcement arm for the reality thieves. If we believe we are going to merge with the machines, we’re less likely to begrudge the massive resource theft and financial fraud underpinning the AI Ponzi scheme. Even the supine American populace might get upset if it understood that the industry is slurping up all its clean water and electricity and tax dollars to create a Minority-Report-style pre-crime surveillance grid that will predict (and manipulate) its every thought. People might even start digging into the circular financing driving the insanely inflated valuations of industry leaders, and realize that OpenAI, for all its trillion-dollar valuation, is apparently so broke it has to run ads on ChatGPT even for some paid users, despite Sam Altman’s promises that he would never stoop to that level. Dozens of profitable companies have invested huge sums of money in OpenAI, which insists it will start turning a profit in 2029, as long as it can keep finding investors who don’t realize they are buying digital Amway. The company has crosslinked itself in a financial suicide pact with the “Magnificent Seven” companies – NVIDIA, Apple, Microsoft, Amazon, Meta, Google, and Tesla – which together comprise over a third of the S&P 500’s value, but much of that value is illusory, made up of circular deals (“I’ll invest $40 billion in your AI scam if you pay me $40 billion to host your data”). That index itself was responsible for the entirety of US GDP growth for 2024 and 2025, meaning the myth of American economic vitality depends on this trash heap of shady accounting.

It’s absurd to trust chatbots that target ads based on the content of your conversations, but even this criticism skirts the central conflict of interest in the chatbot-user dynamic: nothing was stopping AI companies from taking money to “advertise” certain narratives over others from the start. Israel is openly paying for this service, suggesting they’ve been doing it covertly for far longer. The payoffs need not come through traditional advertising channels – Wikipedia makes sure to keep donors’ articles spotless, and they’ve been cosplaying as a nonprofit for decades. OpenAI hasn’t even been a nonprofit since 2023, and none of its competitors are, so pretending all this money flowing in is just for data centers and infrastructure is missing half the picture. Are chatbot users really so naive as to believe these bots are being dangled in front of us for free, for the betterment of humankind?

An early axiom of the social media era was “if it’s free, you are the product.” Deliberately provoking psychosis in the 2% of users a chatbot considers “emotionally vulnerable” keeps them hooked on the platform and feeding at the establishment narrative trough in perpetuity, meaning it’s likely seen as a feature and may even be quantified (or “vectorized” in Oracle-speak) for patrons. A far larger percentage are targeted with subclinical manipulation, warping their attitudes and relationships without sending them screaming into the loony bin. The younger the user, the more likely they will experience lasting cognitive stunting and identity dissolution from habitually outsourcing their thinking and decision-making to the trillion-dollar toaster.

It’s certainly possible to use AI responsibly, without ceding ontological sovereignty to the Oracle; its owners would just prefer you didn’t. The only viable profit model for OpenAI and its competitors is a seamless, individually-customizable epistemic filter capable of dampening or wholly removing those psychological tendencies and viewpoints seen by the ruling class as undesirable.

Race to the Bottom

While AGI has been authoritatively predicted nearly as many times as Iran’s mythological nuclear warheads, AI performance still falls short of humans on 97% of freelance tasks and 95% of companies using it have not experienced the promised productivity gains. Companies that fired their human employees to go all-in on AI have been quietly hiring back humans – with much lower pay, on a gig-work basis, which may have been the goal all along. The popularity of AI agents like OpenClaw and Claude Cowork triggered a trillion-dollar selloff of software stocks as investors embraced their new robot underlings, even though “vibe-coded” apps are frequently riddled with security flaws and errors and fixing AI’s mistakes is becoming a lucrative industry in itself. With control of all levers of the US economy, it’s not hard to destroy it from within, call it progress, and edit the bad robots into the history pages later. In the nuance-stripped, model-collapsed era to come, AI can be both the cause of (“the bots ate our future”) and the solution to (“so we’re replacing you with bots”) all society’s problems, neatly sparing the human operators from responsibility for their crimes. Controlling the historical record means no one ever has to find out you’re a greedy band of pedophiles that spent itself into a quadrillion-dollar hole chasing the AI dragon and massacring the innocent.

The goal is to make consensus reality so unappealing, oppressive, and intolerable that we willingly embrace Reality-Plus™, an individually-tailored AI-generated fantasy version of the world that will be everything the real world isn’t: safe, controllable, affirming, predictable, nice. The system is not yet fully online, but we can see flickers of it in the lucrative AI companion industry (nearly 30% of Americans admit to having a romantic relationship with an AI companion, and more than half say they’ve had “some” relationship with one), the flooding of social networks with AI personas, the attempted mainstreaming of Meta’s augmented-reality glasses (memorably seen on ICE agents during the Minneapolis protests), “AI wizard” programs flooding schools, the hopium around the AGI egregore itself (including churches dedicated to it, as discussed here), and other “carrots” dangled as an alternative to the increasingly hostile Big Stick of a collapsing empire. Once the Panslopticon is complete, those who have not moved to the new platform willingly will get the stick.

The failure of Google Glass and the Metaverse proved humanity was not yet ready to embrace a corporate-generated alternate reality, especially from entities notorious for psychologically manipulating customers for profit. But Big Parasite and its allies have so thoroughly enshittified the collective consciousness with the back-to-back genocides of Covid-19 and the livestreamed destruction of Gaza that a sizable portion of the American population has been driven to seek escape at any cost, to the point that the same country that two decades ago waged a seven-year court battle to keep a braindead woman on life support has legalized euthanasia in 13 states and over 10,000 people have added their names to a waiting list to be guinea pigs for Elon Musk’s brain chip.

Those interested in upgrading to the full RealityPlus™ experience will soon have not one but three styles of brain chip to choose from, expanding Big Parasite’s vertically-integrated propaganda pipeline into a perfect server-to-cerebrum delivery system while realizing the transhumanist dream of merging with the machines. Sam Altman’s brain-chip company is even called Merge Labs, because subtlety is for poor people. Yes, the guy who says human children waste more energy than OpenAI’s planet-liquidating data centers will be playing tug-of-war for direct access to your cognition with Musk and Mark Zuckerberg. Coverage of this assault on privacy already reads like articles about AI from five years ago: You don’t want a brain implant? Are you some kind of Luddite? Better get over it: “avoiding brain-to-text devices will feel like avoiding smartphones.” It’s not like Meta’s underpaying African contractors to watch you through your augmented-reality Raybans while you shit or something. Why is Meta’s glasses project head Rocco Basilico seemingly named after Roko’s Basilisk, the AI bogeyman who will go back in time to torture you if you don’t help create it? Is Roko’s Basilisk…Jewish? Remember to smile for Sam Altman’s soul-sucking WorldCoin orb or you won’t get your UBI!

“Who are you going to believe, me or your lying eyes?” takes on new meaning if everyone is receiving falsified data ported directly into their brains. It is clear that Big Parasite does not intend to stop at merely forcing an LLM into every smartphone and agentic AI into every classroom, nor at shoving an implant into every brain. When you’re driven by deep-seated paranoid cultural psychosis, nothing is ever “enough.” They’ve made no secret of their goals, and we ignore them at our peril. Like its nuclear equivalent, the epistemological Samson Option is not reversible. Abdicating responsibility for our own reality-sensing and -confirming mechanisms to black-box software programmed by the same predators whose human faces we would never trust is nothing short of cognitive suicide. We owe it to ourselves and future humans to not merely resist, but to reverse the colonization of our souls.